How to write a type checker/type inferrer with good error messages

27 Jul 2022

This is an experimental type checker/inferer for a simple lambda calculus. All the source for this may be found on my Codeberg repository.

Description #

This is a type inference system for a little language. (Described below.) It uses a fusion of type inference algorithms from PLAI, ESP, and μKanren. (See Resources)

Broadly speaking, our type inference engine works by:

- generating typing constraints from the program

- solving those constraints

We’ll describe each of those in more detail.

Language description #

We implement a really simple language that includes features such as the following:

42 ; numeric literals

#t ; booleans

(let (x 1) (+ x 1)) ; single-variable let; binary math operators

(λ y (+ y 2)) ; single-argument anonymous functions

(let (id (λ x x)) (if (id #t) (id 2) (id 3))) ; let-polymorphism; conditionals

At time of writing, the let-polymorphism works though it’s still a little rough.

Type checking vs type inference #

Type checking a step in language implementation where type annotations supplied by the user are mechanically checked prior to compiling or execution. Any time when the checker can determine that a value of the wrong type flows to a place (e.g. a variable, argument to a function, etc) it is called a type error.

Type inference saves programmers from having to write out all type annotations. Most times (though not always) it is possible to infer what the type of a variable should be. Literal values are really easy, for example:

let foo = 42;

The variable foo clearly should have some kind of integer type. However, type inference is more powerful than just inferring variable types from their initial values; for example, consider this Rust snippet:

let add_1 = |x| x + 1; // (lambda x: x + 1) for you Python programmers

What should type should the variable x have? Well, we know that it gets passed to +, so definitely some numeric type. Although the programmer doesn’t explicitly annotate the parameter x with its type here, we can tell using information elsewhere in the program. This is the role of type inference.

Why do we care about type inference? #

Type inference saves us a lot of typing. Moreover, if we are trying to retrofit a type system onto an existing system that has a lot of code written in it already, it would be nice to not have to require users of the language to go back and annotate all their existing code. We can still report type errors as we find them—they would have been caught at runtime anyway—ideally, existing code should just work, and future code should turn out safer.

Constraint generation #

What are constraints? #

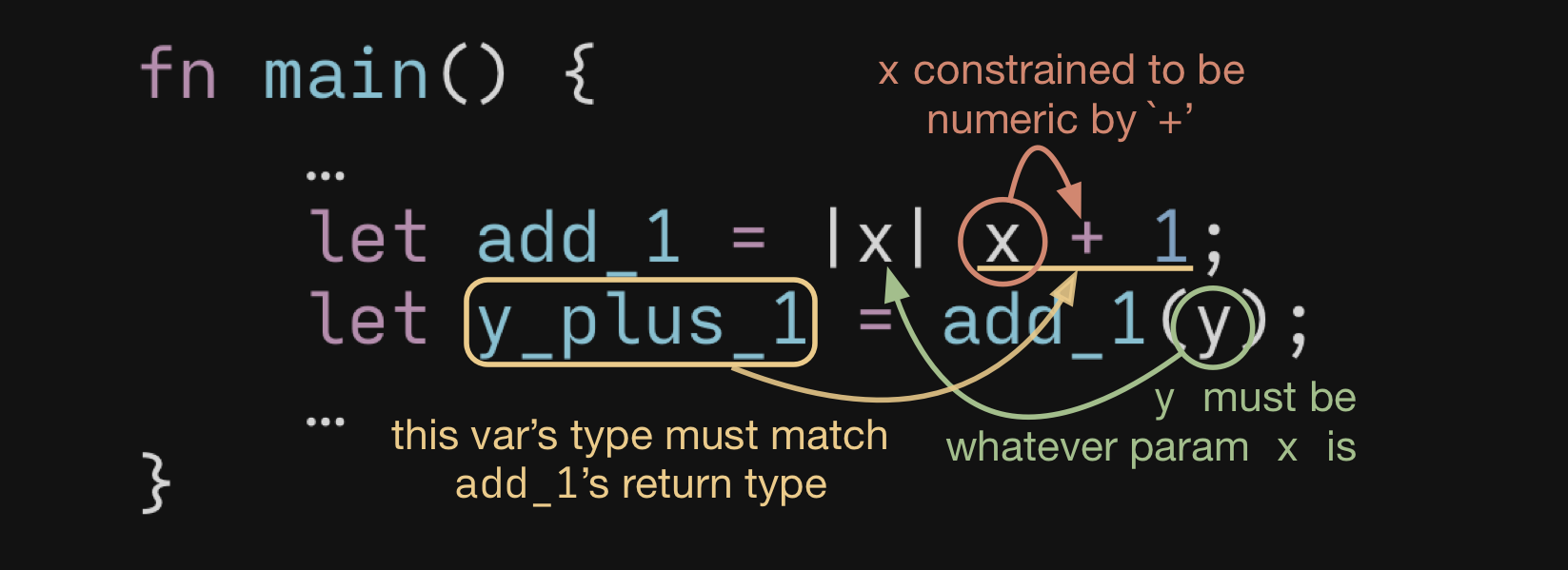

Constraints are statements about what how types and bits of a program relate to each other. For example, here is a little program with some constraints illustrated:

Figure 1: A little Rust program with some type relationships illustrated.

Even though none of the variables have explicit type annotations, we know that x must be some kind of number, add_1 is a function ℕ→ℕ, and y_plus_1 must be a number because it’s the same as the return value as add_1. Moreover, whatever y is, it has to match the input type of add_1 as well.

How do we generate constraints? #

At time of writing, we only have equality constraints, which state that some particular expression must have the same type as another type expression. Later we will likely add subtype constraints or union constraints which will involve some form of back-tracking.

Our algorithm walks through the AST of a program and emits a list of constraints on particular points of the AST. Please see one of the listed Resources for more details.

Most explanations (PLAI, EPL) of a type inference algorithm dump the generated constraints into a set. Here we diverge somewhat from the literature: we gather the constraints into a list, which keeps the constraints in rough order of when we encountered those constraints in the program. This ordering is important for good error generation later on.

We will likely play with how these constraints are ordered in the future.

A good excerpt from PLAI:

What are constraints? They are simply statements about the types of expressions. In addition, though the binding instances of variables are not expressions, we must calculate their types too (because a function requires both argument and return types). In general, what can we say about the type of an expression?

- That it is related to the type of some identifier.

- That it is related to the type of some other expression.

- That it is a number. [/Or in the case of this interpreter, that it is a boolean./]

- That it is a function, whose domain and range types are presumably further constrained.

Unification #

We use ideas from the unify algorithm in μKanren: we have a walk function along with a substitution list that we can modify non-destructively. This differs from how PLAI and EPL describe unify, which often does destructive replacement of variables in the substitution list.

I think this algorithm has the benefit of being a little simpler to understand, once the purpose of the walk function is grokked. It does mean that you must invoke (walk ast-chunk substitution-list) in order to find the type of the AST node.

See the infer-and-annotate and patch-annotations functions for a demonstration of how the substitution list along with the original tagged AST can be used to get the type for every node in the program.

Constructed types, or higher-order types #

Our simple language doesn’t have (yet) types like (listof ℕ), but it could if we wanted to let it. Use function calls as a model for how we would handle these cases. From PLAI:

We have used numbers as a stand-in for all form of base types; functions, similarly, stand for all constructed types, such as

listofandvectorof.

Error message generation #

Our error message generator is sensitive to the order in which type constraints are eliminated during the unification process: we generate the constraints in rough order of when the type of something would be encountered. E.g., when evaluated the form (+ 1 2), we generate the constraints for the literal values 1 and 2, then we generate the numerical type constraint that + imposes on its arguments.

This seems to do a pretty good job of giving us the information we need.

Extending the language #

Adding new forms to the language only involves modifying the constraint generation and error message production routines. (Along with a few ancillary functions like AST tagging etc.) The unify routine essentially stays the same.

When we add type unions we will have to modify unify to support some form of back-tracking. We will also have to make some modifications with let polymorphism.

Open Tasks #

- Basic type inference

- Decent error messages

-

ifandletforms - Type unions

-

let-polymorphism - Occurrence typing for handling nullable types

Resources #

- PLAI

- Programming Languages: Application and Interpretation, Krishnamurthi. See chapter 15 online.

- EPL

- Essentials of Programming Languages, Friedman and Wand. See Chapter 7.

- μKanren

- For the original paper, and implementation, and other resources, see my repo on Codeberg.